FHL Vive Center

Duration: 8 months

Industry Capstone

Team: 1 PM, 3 Designers, 4 Engineers

My role: Lead UX researcher

Context

Ursa is a voice-based rover control system designed to make human–robot interaction intuitive and reliable. Built in collaboration with Qualcomm and NASA, it uses large language models to translate natural language into ROS commands, so users can control a rover in plain speech.

My role: As the UX research lead, I led contextual studies, defined key design opportunities, and created new interaction patterns for the rover’s voice interface from 0 to 1.

Outcome: We designed three LLM features that made navigation faster and smoother, cutting average task time by 7 seconds while improving user confidence in system responses. We also secured more than 10K in funding from sponsors.

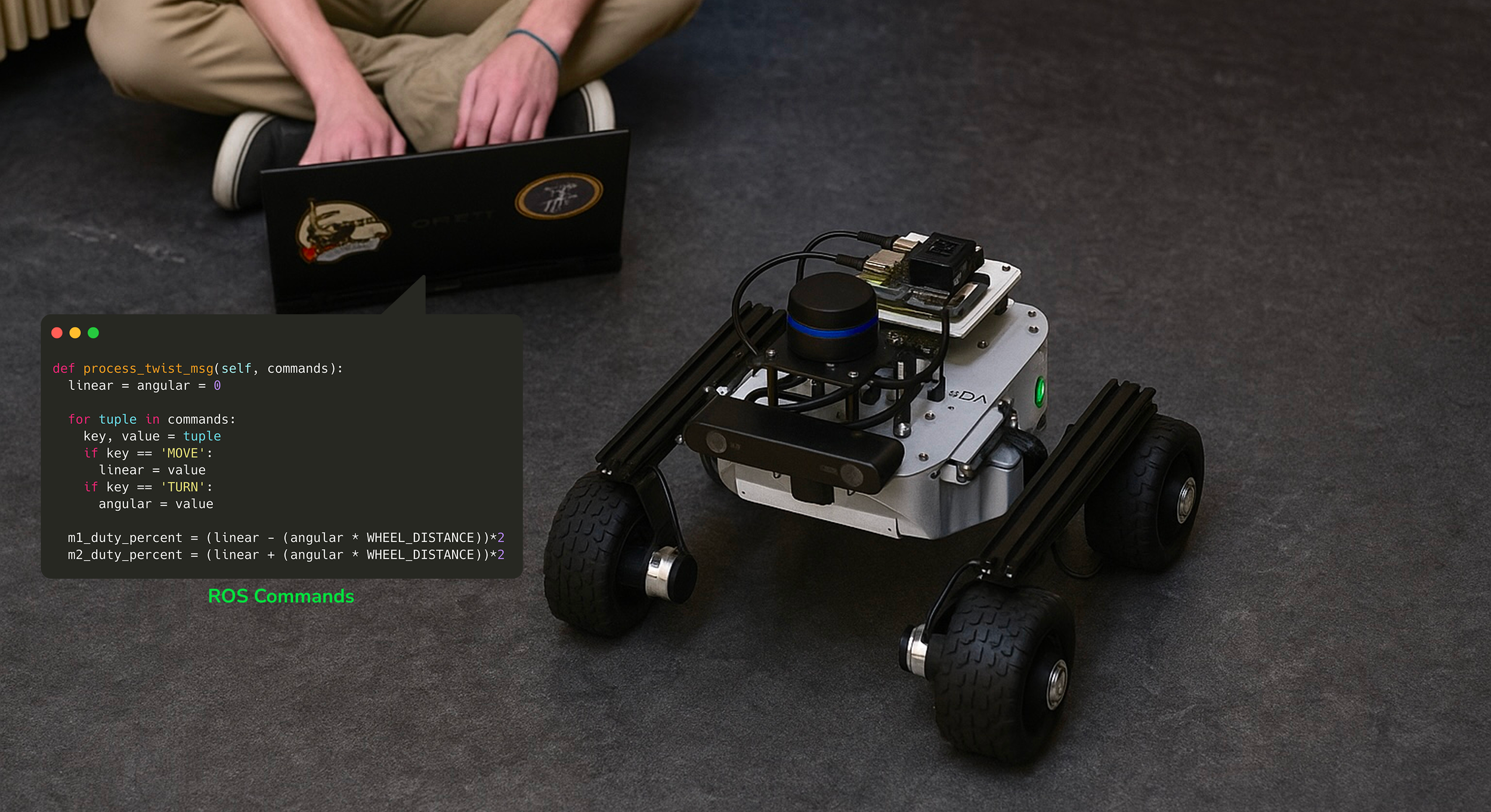

Problem

The goal of this project was to explore a new way for users to control a space rover. When we started the project, there wasn’t yet an interface, only a set of ROS (Robot Operating System) commands that engineers used to control the rover.

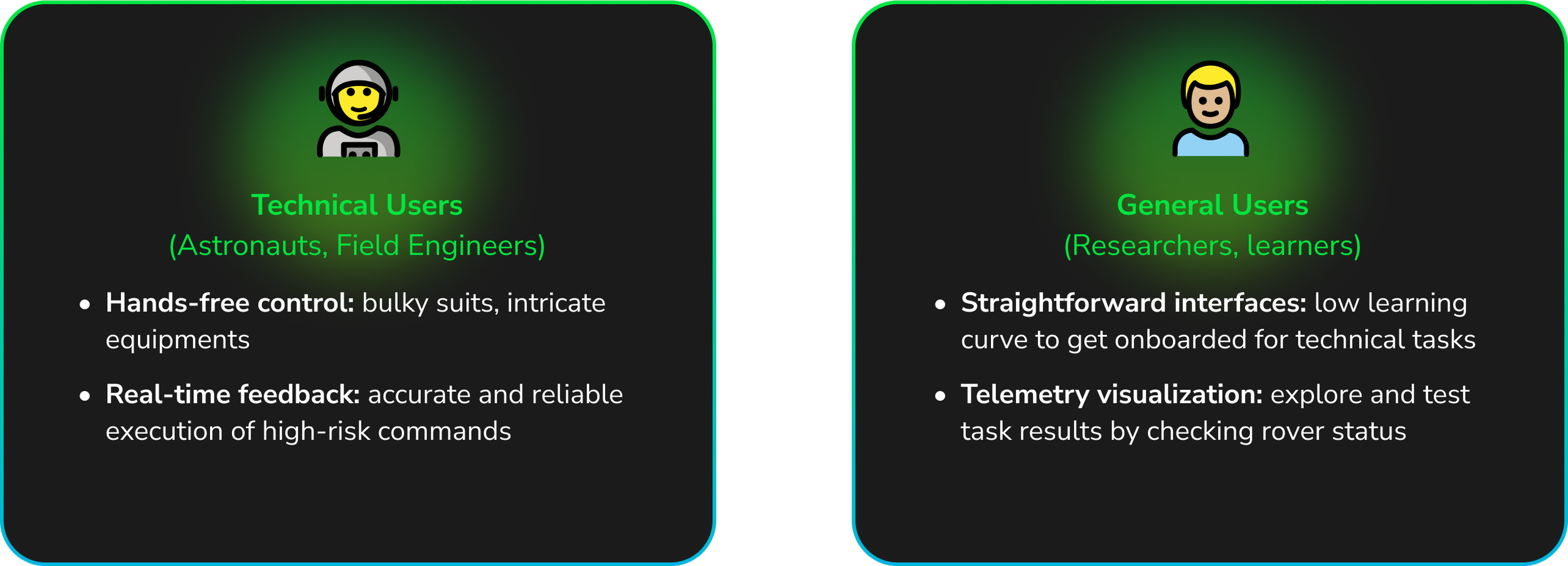

Through early contextual studies, we defined two core user groups:

Technical users who needed precision, feedback, and control in high-stakes environments.

General users who needed an intuitive way to explore and test without learning a programming language.

For astronauts and engineers, ROS commands meant juggling complex syntax under pressure. For general users, it meant the rover was practically inaccessible without technical knowledge.

This led to our central question:

How might we design a rover control system that transforms technical commands into a human-centered, trustworthy experience?

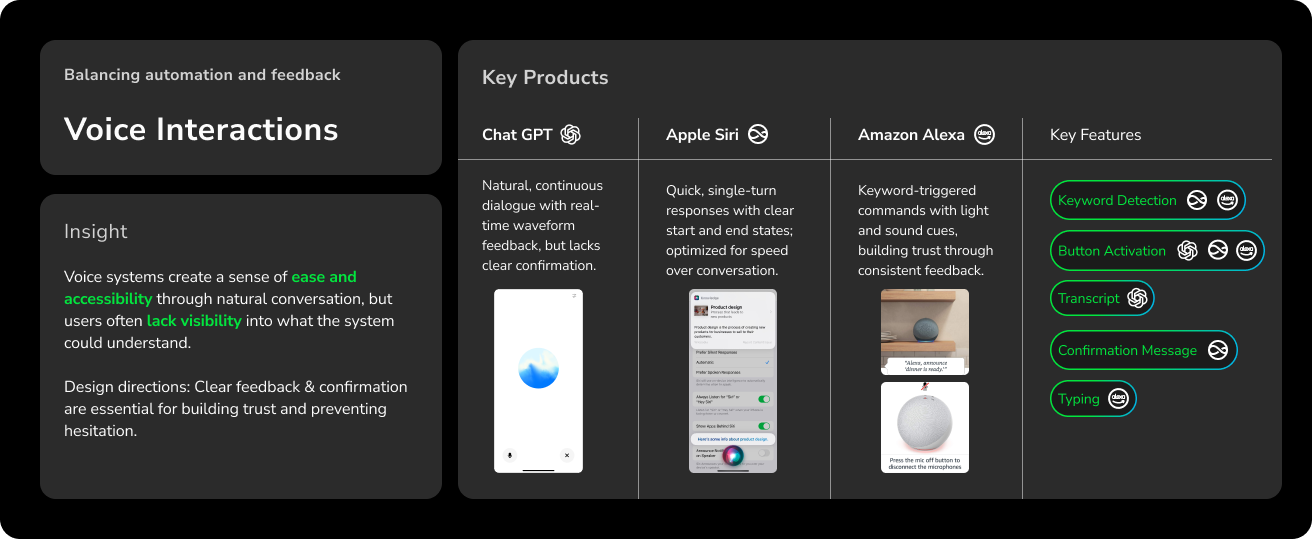

Interaction Pattern Analysis

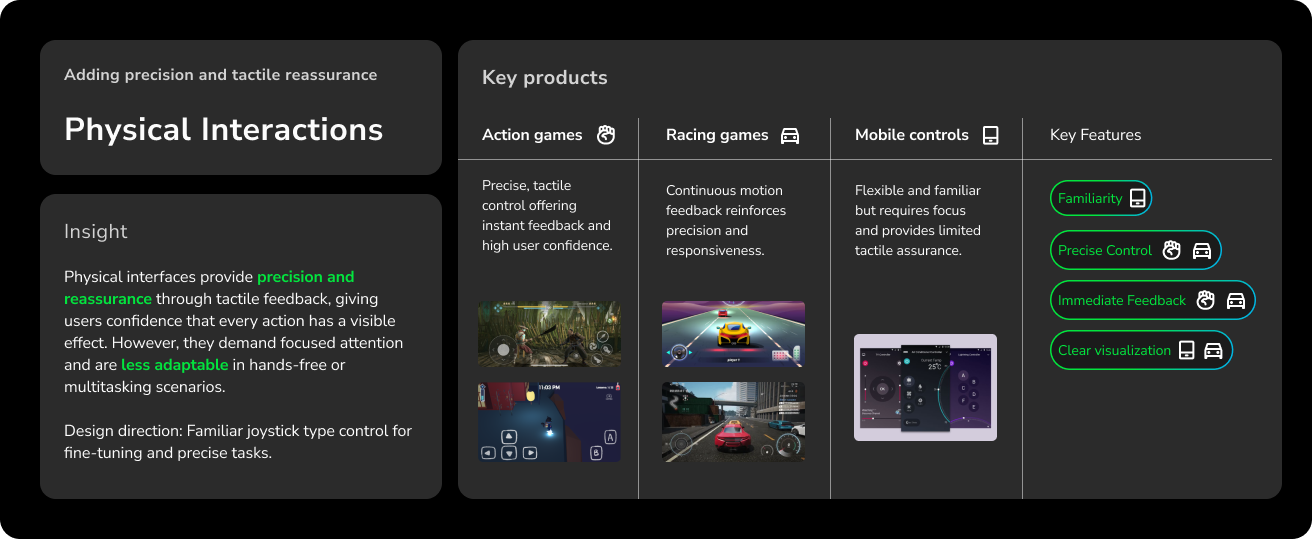

Before defining how users would talk to or control the rover, I conducted an interaction pattern analysis to explore how people already communicate with intelligent systems.

Together, these examples revealed a tension between freedom and control:

Voice interfaces enable hands-free exploration but risk ambiguity.

Physical controls ensure accuracy and feedback but demand constant attention.

The ideal rover interface wouldn’t imitate a single system: it would combine the ease of conversation with the confidence of control.

Ideation

Interaction Model

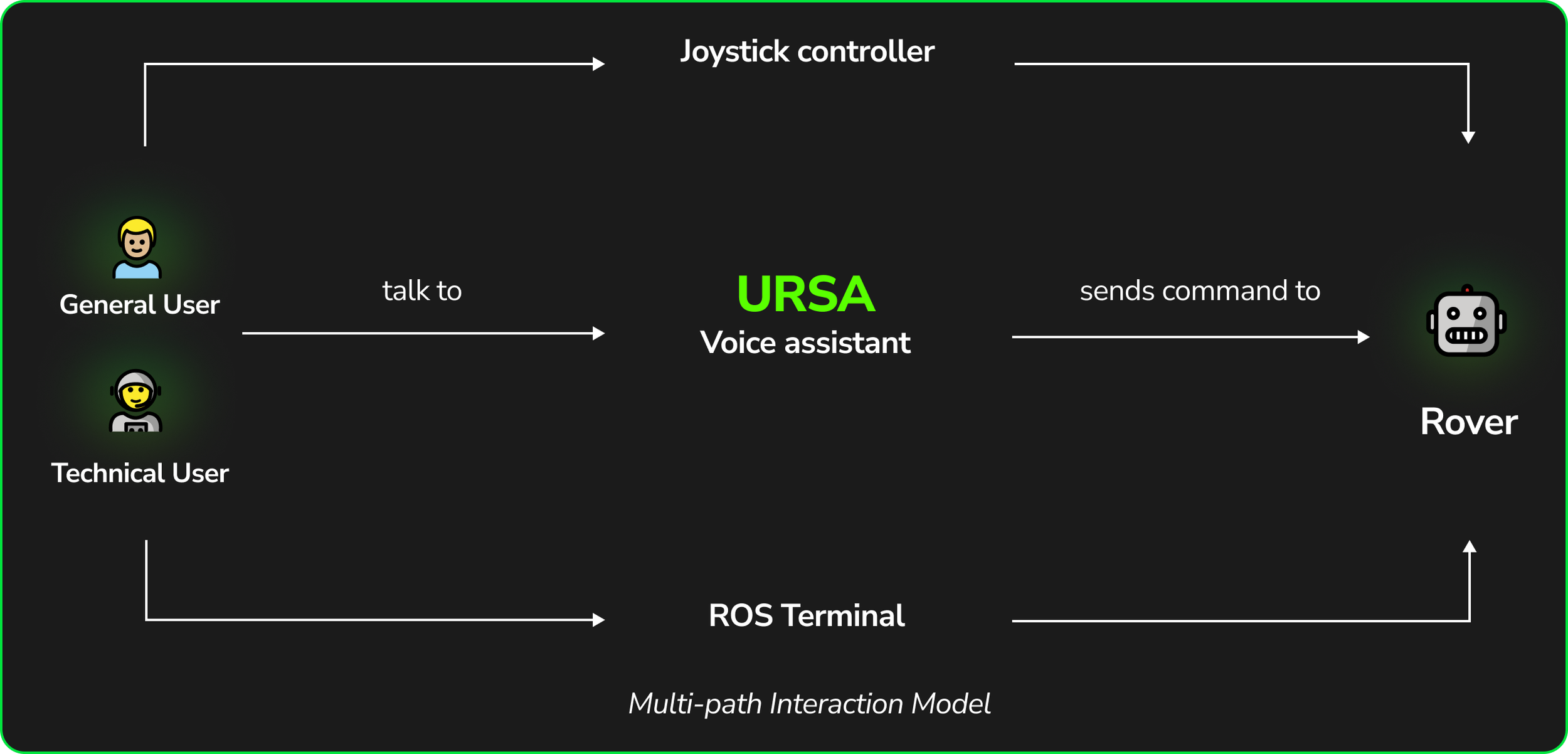

Users have three ways to command the rover: voice, joystick, and ROS terminal. Depending on the complexity of the task, they choose the mode that suits it.

User Flow

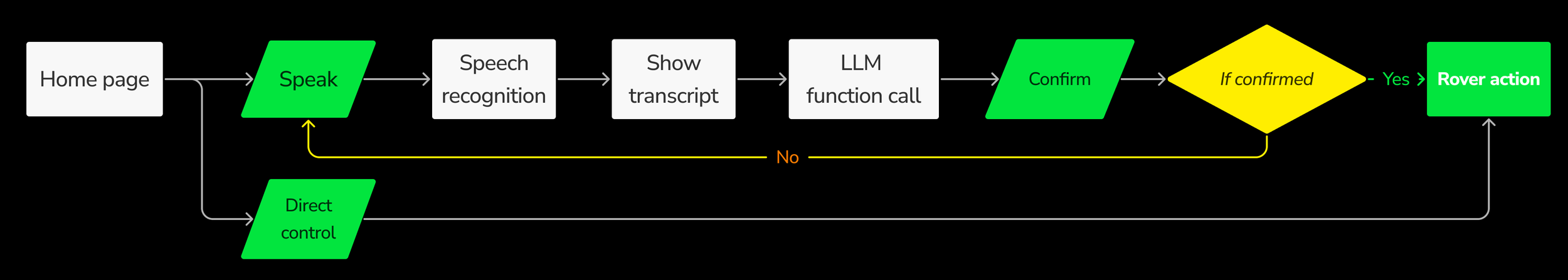

I designed a “Confirm Before Act” flow to build trust in automation. This pattern caught errors early and gave users confidence that nothing would happen without their consent.
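The “Confirm Before Act” pattern can be illustrated with a minimal Python sketch. This is not the project's implementation: the `ConfirmGate` and `PendingCommand` names, the readback wording, and the `execute` callback are all hypothetical, chosen only to show that nothing runs until the user explicitly approves it.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class PendingCommand:
    """A parsed command waiting for the user's explicit approval (hypothetical)."""
    utterance: str  # what the user said
    summary: str    # plain-language readback shown to the user


@dataclass
class ConfirmGate:
    """Holds at most one pending command and executes it only after confirm()."""
    execute: Callable[[PendingCommand], None]  # stand-in for sending to the rover
    pending: Optional[PendingCommand] = None
    log: List[str] = field(default_factory=list)

    def propose(self, utterance: str, summary: str) -> str:
        # Store the command and return the readback; nothing is executed yet.
        self.pending = PendingCommand(utterance, summary)
        self.log.append("proposed")
        return f"I understood: {summary}. Confirm?"

    def confirm(self) -> bool:
        # Execute only if a command is actually waiting for approval.
        if self.pending is None:
            return False
        self.execute(self.pending)
        self.log.append("executed")
        self.pending = None
        return True

    def cancel(self) -> bool:
        # Discard the pending command without executing it.
        if self.pending is None:
            return False
        self.log.append("cancelled")
        self.pending = None
        return True
```

In this sketch, a misheard command costs the user one "cancel" rather than an unintended rover movement, which is the trade-off the flow was designed around.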

Usability Test

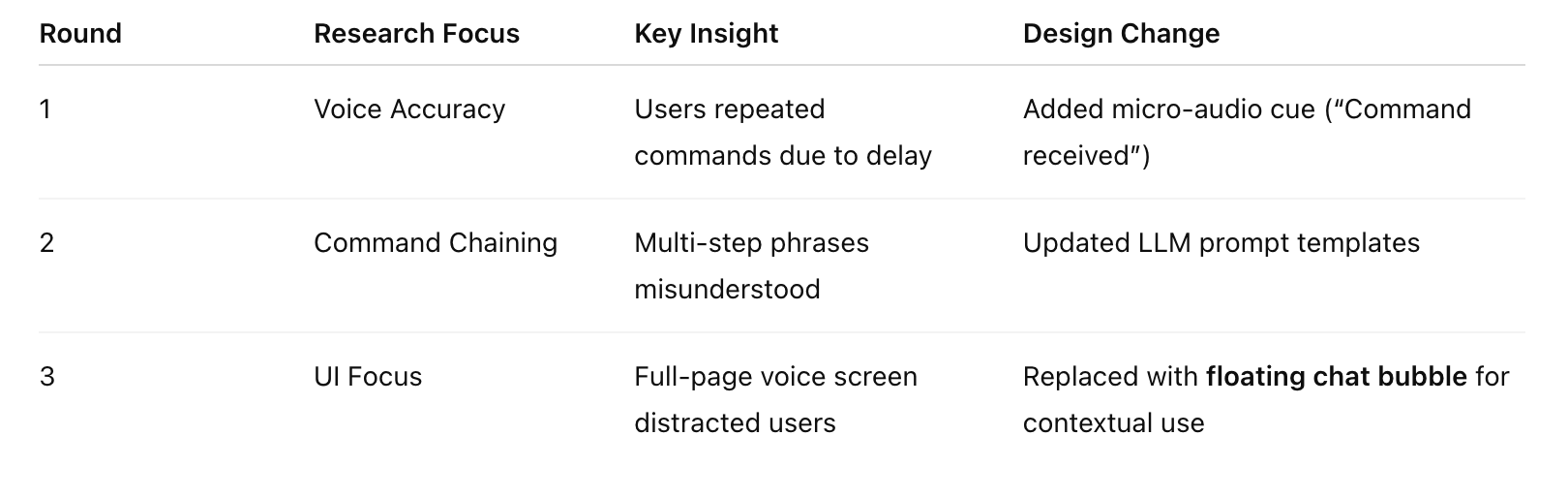

Across 3 rounds of usability testing, I iterated on the interaction model and interface hierarchy.

Outcome

1 Voice Control

Designed for hands-free efficiency, the voice interface allows users to issue natural-language commands without touching the screen.

The system parses speech into ROS-compatible instructions in the back end, providing instant confirmation before executing actions.
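As a rough illustration of the back-end step, the Python sketch below turns a structured intent into a velocity command. It rests on several assumptions that are not from the project: that the language model returns a small JSON object with `action` and `speed` keys, that the speed limits are 0.5 m/s and 1.0 rad/s, and that the rover accepts forward and turning velocities. The `linear_x` and `angular_z` names mirror the `linear.x` and `angular.z` fields of the ROS `geometry_msgs/Twist` message, but no ROS library is used here.

```python
import json
from dataclasses import dataclass

MAX_LINEAR = 0.5   # m/s, assumed safety limit (not from the project)
MAX_ANGULAR = 1.0  # rad/s, assumed safety limit (not from the project)


@dataclass(frozen=True)
class VelocityCommand:
    """Hypothetical stand-in for the linear.x / angular.z fields of a Twist."""
    linear_x: float
    angular_z: float


def clamp(value: float, limit: float) -> float:
    """Keep value within [-limit, limit]."""
    return max(-limit, min(limit, value))


def intent_to_command(intent_json: str) -> VelocityCommand:
    """Map an assumed JSON intent to a bounded velocity command."""
    intent = json.loads(intent_json)
    action = intent.get("action")
    speed = float(intent.get("speed", 0.0))
    if action == "forward":
        return VelocityCommand(clamp(speed, MAX_LINEAR), 0.0)
    if action == "backward":
        return VelocityCommand(-clamp(speed, MAX_LINEAR), 0.0)
    if action == "turn_left":
        return VelocityCommand(0.0, clamp(speed, MAX_ANGULAR))
    if action == "turn_right":
        return VelocityCommand(0.0, -clamp(speed, MAX_ANGULAR))
    if action == "stop":
        return VelocityCommand(0.0, 0.0)
    # Unknown intents are rejected instead of guessed at.
    raise ValueError(f"unsupported action: {action!r}")
```

Rejecting unknown actions and clamping speeds keeps an ambiguous utterance from becoming an unbounded movement, and the resulting command is what the user would be asked to confirm.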

2 Joystick Control

The joystick provides a direct and intuitive fallback for movement and fine adjustments. By mirroring familiar interaction patterns, it lowers the learning curve and ensures users can always intervene manually when voice input is unreliable.

This mode is designed for both astronauts and general users who prefer tactile feedback or need precise control during navigation.

3 ROS Terminal

For advanced users and developers, the ROS terminal offers full manual access to system-level commands. It allows direct debugging, testing, and function calling with real-time telemetry feedback.

This mode gives engineers and researchers flexibility and transparency, maintaining the robustness of traditional control while integrating with the new interface.

Outcome

Project presentation to Qualcomm

Learnings

1. Designing from behavior, not technology.

At first, it was tempting to focus on what the LLM could do. But by observing users and mapping their intent, I learned that effective AI design starts with human expectations, not system capabilities. The conversation patterns shaped the technology, not the other way around.

2. Balancing freedom and control.

Every design decision came back to a single question: When should the user lead, and when should the system assist?

By combining voice, joystick, and terminal, we created layered control that empowers users to switch between trust and precision depending on context.